Turbocharge Your Content with Sitecore AI Publishing V2

Published: 24 February 2026

If you have worked with Sitecore AI (XM Cloud), you already know:

Publishing performance directly impacts delivery speed and author productivity.

For a long time, Snapshot Publishing (V1) powered Sitecore AI content delivery. It worked, but as projects scaled, publishing times increased, CM servers became overloaded, and queues slowed down deployments.

Now, Sitecore introduces a modern, cloud-native approach:

Experience Edge Runtime (Publishing V2)

Let’s explore what changed and why it matters.

The Bottleneck: Publishing V1 (Snapshot-Based)

In Publishing V1, when you publish a page, the CM server performs heavy processing before the content even reaches Experience Edge. It:

- Calculates the entire layout.

- Resolves all data sources.

- Executes the Layout Service pipelines.

- Creates a massive, static JSON blob (a "Snapshot") to send to Experience Edge.

The Result: Publishing a simple page update can take minutes, because even a small content change requires rebuilding the entire page structure.

As your site grows:

- Layout complexity increases

- Datasource relationships multiply

- CPU usage on CM rises

- Publish queues grow longer

What once took seconds can quickly turn into minutes.

And at scale, this affects both publishing speed and authoring performance.

The Solution: Publishing V2 (Edge Runtime)

Sitecore AI introduced a new way to publish content: Publishing V2 (also known as Edge Runtime mode). It sounds technical, but it’s a beautiful simplification of how data moves.

Publishing V2 changes the architecture entirely.

Instead of pre-calculating the full layout JSON on the CM server, Sitecore now:

- Publishes raw item data

- Publishes layout references

- Defers JSON assembly to the Edge runtime

The final JSON response is assembled dynamically on the Experience Edge delivery layer, not on CM.

This shifts the workload from the single CM instance to the massively scalable Edge CDN.
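To make the shift concrete, here is a minimal sketch of the two models in TypeScript. It is a toy in-memory illustration, not Sitecore code: the types `ContentItem`, `LayoutReference`, and `PageDef` and the functions `publishSnapshot`, `publishItem`, and `handleRequest` are hypothetical names invented for this example.

```typescript
// Hypothetical, simplified model. None of these names are Sitecore APIs.
type ContentItem = { id: string; fields: Record<string, string> };
type LayoutReference = { component: string; datasourceId: string };
type PageDef = { id: string; layout: LayoutReference[] };
type RenderedComponent = {
  component: string;
  fields: Record<string, string> | null;
};

// Resolve every layout reference against an item store.
function assemble(
  page: PageDef,
  items: Map<string, ContentItem>,
): RenderedComponent[] {
  return page.layout.map((ref) => ({
    component: ref.component,
    fields: items.get(ref.datasourceId)?.fields ?? null,
  }));
}

// V1 style: CM resolves everything and ships the finished JSON for the page.
function publishSnapshot(page: PageDef, cmItems: Map<string, ContentItem>): string {
  return JSON.stringify(assemble(page, cmItems));
}

// V2 style: CM ships only the changed raw item; the Edge store keeps it as-is.
function publishItem(changed: ContentItem, edgeStore: Map<string, ContentItem>): void {
  edgeStore.set(changed.id, changed);
}

// V2 style: assembly happens on the Edge side when a request arrives.
function handleRequest(page: PageDef, edgeStore: Map<string, ContentItem>): string {
  return JSON.stringify(assemble(page, edgeStore));
}

const hero: ContentItem = { id: "hero-1", fields: { title: "Welcome" } };
const teaser: ContentItem = { id: "teaser-1", fields: { title: "Read more" } };
const home: PageDef = {
  id: "home",
  layout: [
    { component: "Hero", datasourceId: "hero-1" },
    { component: "Teaser", datasourceId: "teaser-1" },
  ],
};

const cmItems = new Map<string, ContentItem>([
  [hero.id, hero],
  [teaser.id, teaser],
]);
const edgeStore = new Map<string, ContentItem>(cmItems);

// An author edits the hero title.
const editedHero: ContentItem = { id: "hero-1", fields: { title: "Hello" } };
cmItems.set(editedHero.id, editedHero);

const v1Payload = publishSnapshot(home, cmItems); // whole page rebuilt on CM
publishItem(editedHero, edgeStore);               // only one item is sent
const v2Response = handleRequest(home, edgeStore); // assembled at request time
```

Both paths produce the same JSON for the consumer. The difference is where the assembly work happens and how much data the publish step has to move.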

The "Cake" Analogy: V1 vs. V2

To understand the difference, imagine you are sending a cake to a friend.

Publishing V1 (Snapshot):

You bake the cake, frost it, box it up, and ship the entire heavy box.

Technical Translation: Sitecore calculates the entire layout JSON on the CM server and sends a massive static blob to Experience Edge.

Result: Slow and heavy.

If you change one ingredient, you must bake the whole cake again.

Publishing V2 (Edge Runtime):

You just send the recipe and the ingredients. Your friend (Experience Edge) assembles the cake instantly when someone asks for it.

Technical Translation: Sitecore sends only the raw item data and layout references. Experience Edge assembles the JSON at "runtime" when the API is called.

Result: Lightning fast.

You only ship the tiny changes.

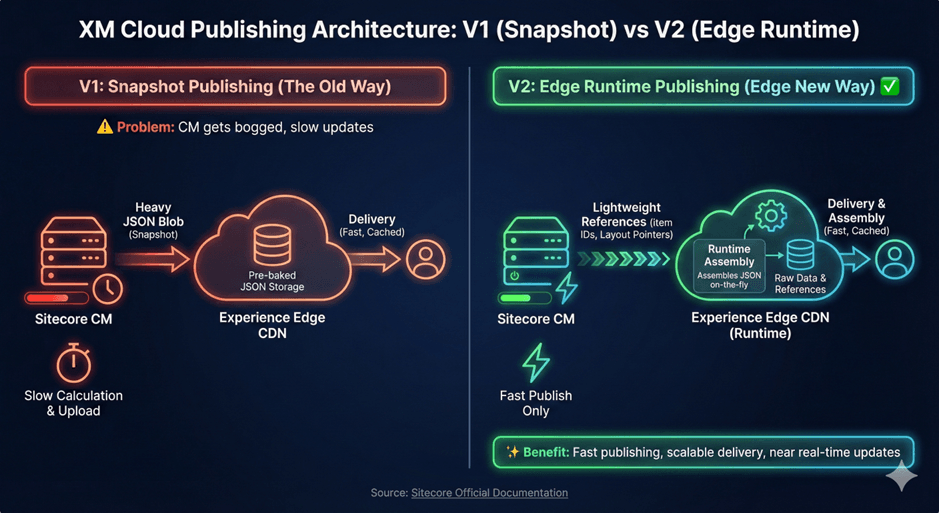

Architecture Comparison

V1 Flow (Snapshot Publishing)

CM → Layout Service Processing → Full JSON Snapshot → Experience Edge → CDN → Frontend

Everything is assembled on CM before delivery.

V2 Flow (Edge Runtime)

CM → Raw Items + Layout References → Edge Worker Runtime → JSON Assembly → CDN → Frontend

JSON is assembled at request time by the Edge worker.

Feature Comparison

| Feature | Publishing V1 (Snapshot) | Publishing V2 (Runtime) |
|---|---|---|
| Speed | Slow. Heavy calculation on CM, because it pre-calculates everything. | Fast. Minimal calculation on CM, because it publishes only changed item references. |
| Payload | Heavy. Sends full JSON blobs. | Light. Sends references only. |
| Architecture | "Static Bundling" logic runs on CM. | "Dynamic Assembly" logic runs on Edge. |
| Staleness | High risk of publishing queues getting clogged. | Near real-time updates. |
| Complexity | Simple (all or nothing): what you publish is what you get. | Strict. Related items (data sources) must be published, which requires dependency awareness. |

Key Advantages of Publishing V2

Blazing Fast Publish Times

Since layout JSON is no longer generated on CM, publish jobs typically complete in seconds rather than minutes.

This is especially noticeable on:

• Large component-based pages

• Sites with heavy personalization

• Complex layout structures

Better CM Performance

The CM instance is no longer CPU-bound by layout assembly.

This means:

- Better authoring performance

- Higher concurrency

- Multiple publish jobs can run safely

True Scalability

Processing shifts to the globally distributed Experience Edge runtime designed for high availability and horizontal scaling.

Your publishing performance now scales with Edge infrastructure, not your CM size.

Important Behavioral Change: Strict Dependencies

This is where V2 requires architectural awareness.

In V1:

• The snapshot captured fully resolved data at publish time.

• Previously resolved data could still exist inside snapshots.

In V2:

• JSON is assembled at request time.

• If a datasource item is not published, it does not exist in the GraphQL response.

This can result in:

• Empty components

• Missing navigation items

• Incomplete JSON output

Best Practice:

Always do one of the following:

• Use the Publish Related Items option.

• Ensure parent and datasource items are included in the publish job.
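A pre-publish check for this rule could look like the sketch below. It assumes you can list the datasource IDs a page references and the IDs that are already on Edge. The `PageWithLayout` shape and the `findMissingDatasources` helper are hypothetical and not part of any Sitecore SDK.

```typescript
// Hypothetical shapes for illustration only; not Sitecore SDK types.
type DatasourceRef = { component: string; datasourceId: string };
type PageWithLayout = { id: string; layout: DatasourceRef[] };

// Return the datasource IDs that the page references but that are neither
// already published nor included in the current publish job.
function findMissingDatasources(
  page: PageWithLayout,
  publishedIds: ReadonlySet<string>,
  publishJobIds: ReadonlySet<string>,
): string[] {
  const missing = new Set<string>();
  for (const ref of page.layout) {
    const covered =
      publishedIds.has(ref.datasourceId) || publishJobIds.has(ref.datasourceId);
    if (!covered) {
      missing.add(ref.datasourceId);
    }
  }
  return Array.from(missing);
}

const landingPage: PageWithLayout = {
  id: "home",
  layout: [
    { component: "Hero", datasourceId: "hero-1" },
    { component: "Teaser", datasourceId: "teaser-1" },
  ],
};

// "hero-1" is already on Edge; the job contains only the page itself.
const missingIds = findMissingDatasources(
  landingPage,
  new Set(["hero-1"]),
  new Set(["home"]),
);
```

Here `missingIds` contains `teaser-1`, so under V2 the Teaser component would render empty until that item is published too.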

Caching Behavior in V2

V2 invalidates cache per item ID.

It does not automatically invalidate related items such as:

• Parent navigation

• Sibling teasers

• Shared components

If a shared data source changes and its parents are not published, you may see stale content.

Always plan publish dependencies carefully.
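The stale-parent effect can be reproduced with a small in-memory model. This is only a sketch of per-item invalidation as described above, not how Experience Edge is actually implemented; the `EdgeCacheModel` class and its methods are hypothetical.

```typescript
// Hypothetical in-memory model of per-item cache invalidation.
type NavItem = { id: string; title: string; childIds: string[] };

class EdgeCacheModel {
  private store = new Map<string, NavItem>();
  private cache = new Map<string, string>();

  // Publishing stores the item and clears the cache entry for that ID only.
  publish(item: NavItem): void {
    this.store.set(item.id, item);
    this.cache.delete(item.id);
  }

  // A response is assembled on a cache miss, then cached under the item ID.
  query(id: string): string {
    const cached = this.cache.get(id);
    if (cached !== undefined) return cached;

    const item = this.store.get(id);
    if (!item) return "null";

    const children = item.childIds
      .map((childId) => this.store.get(childId)?.title)
      .filter((title): title is string => title !== undefined);
    const response = JSON.stringify({ title: item.title, children });
    this.cache.set(id, response);
    return response;
  }
}

const edgeModel = new EdgeCacheModel();
const mainNav: NavItem = { id: "nav", title: "Main navigation", childIds: ["about"] };
edgeModel.publish(mainNav);
edgeModel.publish({ id: "about", title: "About", childIds: [] });

const beforeEdit = edgeModel.query("nav"); // cached under "nav"
edgeModel.publish({ id: "about", title: "About us", childIds: [] }); // clears "about" only
const staleNav = edgeModel.query("nav");   // still shows the old child title
edgeModel.publish(mainNav);                // republishing the parent clears "nav"
const freshNav = edgeModel.query("nav");   // now shows "About us"
```

In this model the parent response stays stale until the parent itself is published, which is why the parent belongs in the same publish job as the shared item.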

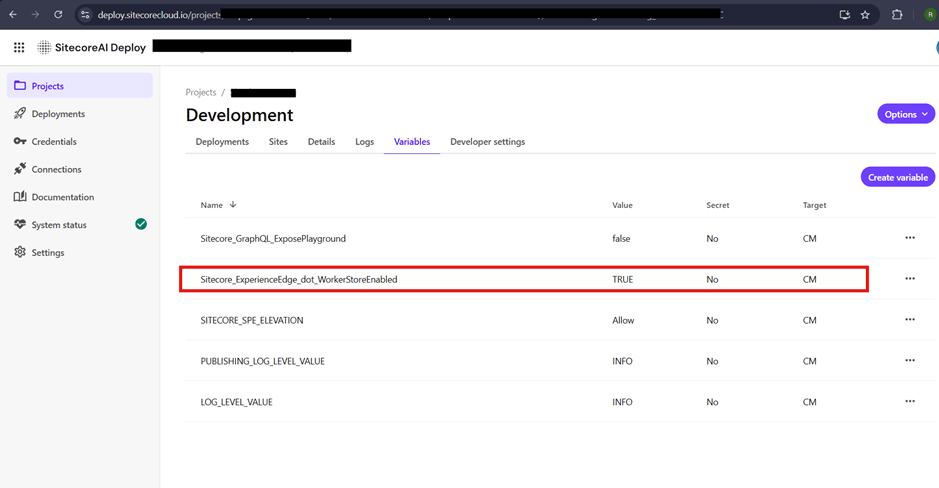

How to Implement (The "Switch")

No code changes required. No config patching. No redeployment of frontend.

You can do this directly in the Sitecore AI Deploy Portal.

Step 1: Log in to SitecoreAI Deploy.

Step 2: Select your Project and Environment (e.g., Production, QA, or Development).

Step 3: Go to the Variables tab.

Step 4: Click Create Variable and add the following:

• Name: Sitecore_ExperienceEdge_dot_WorkerStoreEnabled

• Value: TRUE

• Target: CM

Step 5: Save and deploy your environment.

Step 6: Once deployment completes, republish all sites in the environment.

Important: Deployment + full republish is required for activation.

To revert to V1:

• Change the variable Sitecore_ExperienceEdge_dot_WorkerStoreEnabled to FALSE.

• Redeploy the environment

• Delete Edge content

• Republish all sites

Frontend Impact: The Best News

This is the "sweetest" part of the update.

You do not need to change your Next.js API endpoints.

Even though the backend architecture has completely changed, the Frontend Contract remains the same.

- Your Endpoint: Remains https://edge.sitecorecloud.io/api/graphql/v1

- Your Query: Remains exactly the same.

- Your JSON Response: Looks exactly the same.

- Your Code: Requires zero refactoring.

Why?

The change from V1 to V2 is purely architectural (Backend/Ingestion). It changes how data gets into the Edge, but it does not change how you read it out (Delivery).

- V1: Edge reads a pre-baked JSON blob.

- V2: Edge reads references and assembles the JSON blob on the fly.
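For reference, a typical layout request from a Next.js app might look like the sketch below, and it would be identical under V1 and V2. It assumes the `layout` query shape and the `sc_apikey` header commonly used with Experience Edge; check both against your own schema. The site name, route, language, and API key values are placeholders, and `buildLayoutRequest` and `fetchLayout` are hypothetical helper names.

```typescript
const EDGE_ENDPOINT = "https://edge.sitecorecloud.io/api/graphql/v1";

// Assumed query shape; verify it against your Experience Edge schema.
const LAYOUT_QUERY = `
  query Layout($site: String!, $routePath: String!, $language: String!) {
    layout(site: $site, routePath: $routePath, language: $language) {
      item {
        rendered
      }
    }
  }
`;

type LayoutVariables = { site: string; routePath: string; language: string };
type EdgeRequest = {
  method: string;
  headers: Record<string, string>;
  body: string;
};

// Build the request without sending it, so it can be inspected or tested.
function buildLayoutRequest(apiKey: string, variables: LayoutVariables): EdgeRequest {
  return {
    method: "POST",
    headers: { "Content-Type": "application/json", sc_apikey: apiKey },
    body: JSON.stringify({ query: LAYOUT_QUERY, variables }),
  };
}

// Send the request and return the parsed JSON response.
async function fetchLayout(apiKey: string, variables: LayoutVariables): Promise<unknown> {
  const response = await fetch(EDGE_ENDPOINT, buildLayoutRequest(apiKey, variables));
  if (!response.ok) {
    throw new Error(`Edge request failed with status ${response.status}`);
  }
  return response.json();
}

// Placeholder values for illustration.
const exampleRequest = buildLayoutRequest("YOUR_API_KEY", {
  site: "my-site",
  routePath: "/",
  language: "en",
});
```

Nothing in this request refers to the publishing mode, which is why switching to V2 leaves frontend code untouched.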

Real-World Impact

In enterprise implementations, teams have observed:

• Homepage publish time reduced from minutes to seconds

• Massive reduction in publish queue backlogs

• Improved CM responsiveness during large content pushes

For content-heavy, composable architectures, this is a game-changing improvement.

Final Verdict

Publishing V2 (Experience Edge Runtime):

✔ Faster

✔ Lighter

✔ More scalable

✔ No frontend changes

✔ Cleaner architecture

The only requirement?

Be disciplined with publish dependencies.

For most Sitecore AI headless projects, this is a clear upgrade and a rare “win-win” architectural improvement.

Official Documentation

Ready to make the switch? Check out the official guides here:

• Sitecore: Enable Edge Runtime Publishing

• Understanding Publishing Architecture

Closing Thought

Sitecore AI is evolving toward true cloud-native architecture, and Publishing V2 is a major step forward.

If you are still on Snapshot Publishing, this is the time to switch.

Happy Publishing.

Roshan Ravaliya

Software Developer – Sitecore & .NET

Roshan is a Sitecore and .NET developer at Addact, specializing in Sitecore XM Cloud, Sitecore XP, and SitecoreAI-driven implementations. He works with .NET Core, C#, Azure Functions, and Next.js to deliver scalable, cloud-enabled, and performance-focused digital solutions.